CONNECTING GOOGLE CLOUD PLATFORM(GCP) TO SNOWFLAKE: A STEP-BY-STEP GUIDE

Last updated: September 2024

Quick answer: To connect GCP to Snowflake, create a Google Cloud Storage (GCS) bucket, configure a Snowflake storage integration with your GCS service account, grant the Snowflake service account Storage Object Viewer permissions on the bucket, then set up Snowpipe with a GCS Pub/Sub notification channel for automated near-real-time data ingestion into Snowflake.

Introduction

Connecting Google Cloud Platform (GCP) to Snowflake enables scalable data warehousing with automated ingestion from Google Cloud Storage. Using Snowpipe and GCS Pub/Sub notifications, you can load data from GCS buckets into Snowflake tables in near real-time. This step-by-step GCP-to-Snowflake connection guide covers bucket setup, storage integration, IAM permissions, and Snowpipe configuration with screenshots.

Pre-requisites

- A Snowflake account with ACCOUNTADMIN or equivalent privileges.

- A GCP account with permissions to manage storage buckets, Pub/Sub, and IAM roles.( https://console.cloud.google.com/)

- Basic familiarity with SQL and cloud storage.

- A Snowflake account hosted on GCP (or supporting cross-cloud ingestion).

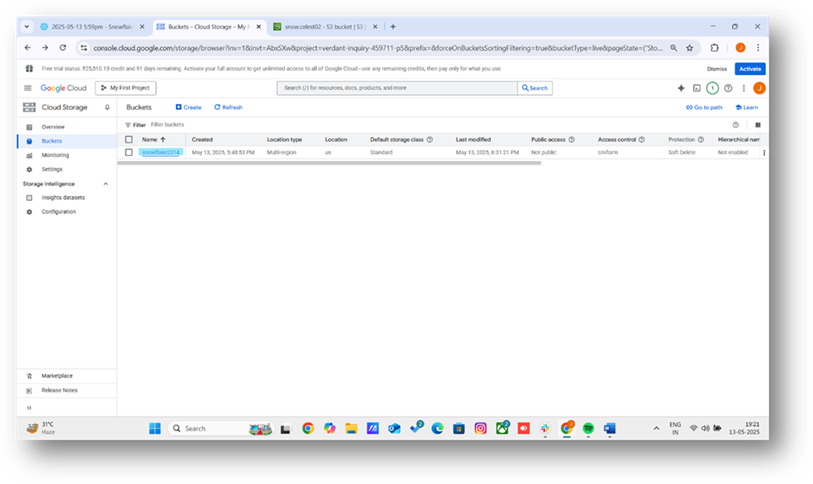

Step 1: Create a Google Cloud Storage Bucket

- Log into the GCP Console and navigate to Cloud Storage > Buckets.

- Click Create bucket, enter the name snowflake2214, and select a region compatible with Snowflake (e.g., us-central1).

- Keep default settings for storage class and access control, then click Create.

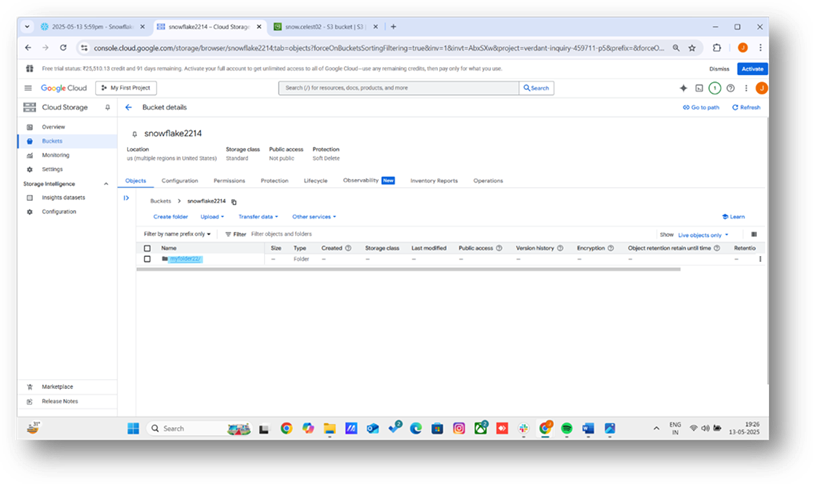

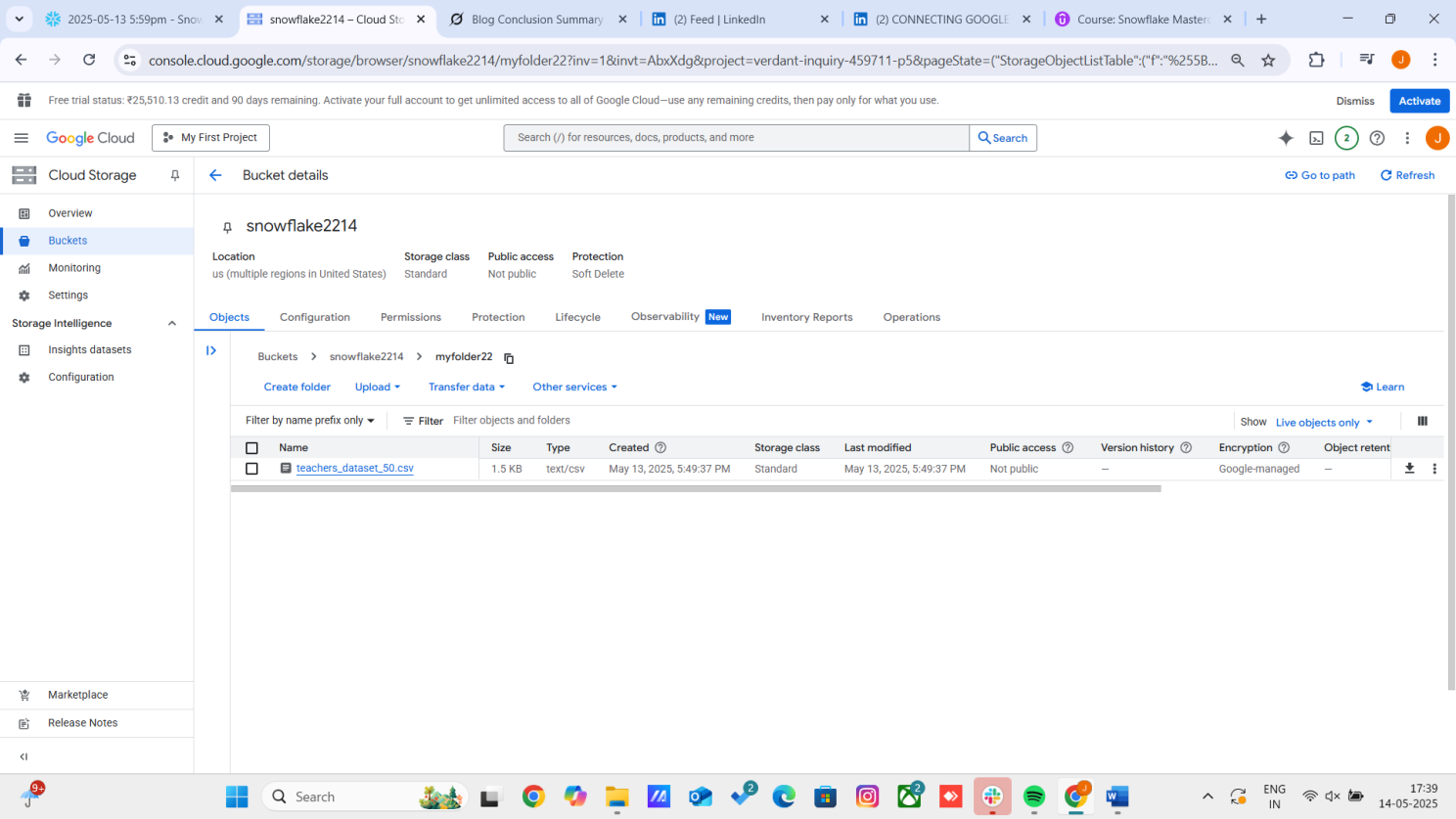

Step 2: Upload a Sample File to the Folder

- In the GCP Console, go to the snowflake2214 bucket.

- Create a folder named myfolder22 by clicking Create folder.

- Navigate to myfolder22 and click Upload files to add a sample CSV file (e.g., teachers.csv) with columns: teacher_id, name, gender, subject, experience. Example content:

- teacher_id,name,gender,subject,experience

- Confirm the file appears in snowflake2214/myfolder22.

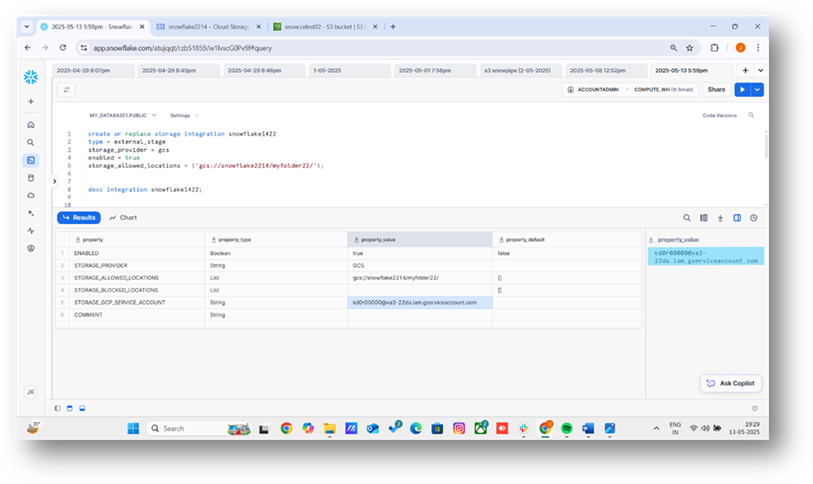

Step 3: Create a Storage Integration in Snowflake

- Open Snowflake’s web interface or SnowSQL command-line client.

- Run the following SQL to create a storage integration for GCS:

Syntax : (for creating strorage integration)

create or replace storage integration snowflake1422 --(integration name)

type = external_stage

storage_provider = gcs

enabled = true

storage_allowed_locations = ('gcs://snowflake2214/myfolder22/'); --(bucket name)

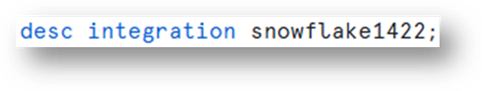

1. Retrieve the GCS service account ID

2. You can see the GCS code by running the below command.

Step 4: Grant Permissions in GCP

1. In the GCP Console, navigate to Cloud Storage > Buckets > snowflake2214.

2. Go to the Permissions tab and click Add.

3. Add the Snowflake service account from Step 3 as a principal.

4. Assign the role storage admin to allow Snowflake to read files in myfolder22.

5. Save the changes.

Step 5: Create a Stage and File Format in Snowflake

1. Create a file format for CSV files:

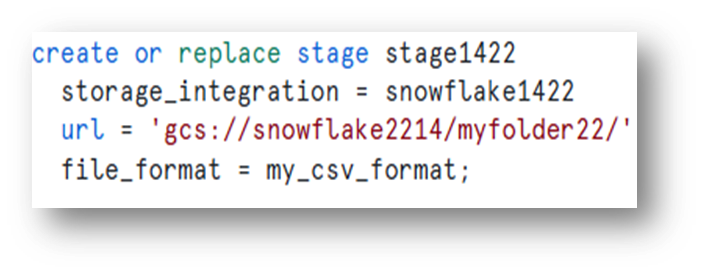

2. Create an external stage pointing to the GCS folder:

3. Show the stages:

Step 6: Run the Data by using Select command

1. Write the command with the identities of your table.

(ex. Teacher_id,name,section,subject…etc)

2. Run the command you’ll get your respective data which is in the google gcp.

Conclusion

Loading data from Google Cloud Platform to Snowflake using the SELECT command offers a streamlined and efficient approach to data integration. By leveraging Snowflake’s robust data handling capabilities and GCP’s scalable infrastructure, users can seamlessly transfer and transform data for advanced analytics. You can also load data from Azure Blob Storage into Snowflake using a similar storage integration approach. Once data is loaded, consider using dbt for ELT data transformations to model and clean your data at scale.

Frequently Asked Questions

Q: How do I connect GCP to Snowflake?

To connect GCP to Snowflake, create a GCS storage integration in Snowflake, set up a service account in GCP with appropriate permissions, create an external stage pointing to your GCS bucket, and use the COPY INTO command to load data.

Q: What GCP services work with Snowflake?

Snowflake integrates with Google Cloud Storage (GCS) for data loading and unloading. You can also leverage BigQuery for data sharing and Google Cloud Pub/Sub for event-driven data pipelines.

Q: Do I need a storage integration for GCP to Snowflake?

Yes. A storage integration is recommended for GCP to Snowflake connectivity. It provides secure, managed access to your GCS buckets without embedding credentials in SQL statements, using GCP service accounts for authentication.

Still have questions?

Get AssistanceReady? Let's Talk!

Get expert insights and answers tailored to your business requirements and transformation.

Get Assistance