A Practical Guide to Talend’s tDBOutput ,tDBOutputBulk And tDBBulkExec Components :

Last updated: August 2024

Quick answer: Use tDBOutput for small-to-medium row-by-row inserts/updates/deletes with full DML control. Use tDBOutputBulk + tDBBulkExec together for high-volume bulk loading -- tDBOutputBulk writes data to a local file, then tDBBulkExec uses the database's native bulk loader (e.g., LOAD DATA INFILE for MySQL) to load it at 5-10x faster speeds than row-by-row inserts.

Introduction

Choosing between Talend's tDBOutput, tDBOutputBulk, and tDBBulkExec components is essential for building efficient ETL pipelines. tDBOutput handles row-by-row database operations, while the tDBOutputBulk + tDBBulkExec combination uses native database bulk loading for dramatically faster data ingestion. This component choice is a key part of Talend performance tuning. This guide compares all three Talend database output components with configuration examples and performance benchmarks.

tDBOutput

The tDBOutput component in Talend is used for performing traditional row-by-row database operations such as inserts, updates, or deletes. It is a straightforward way to write data directly into a database table as part of an ETL flow.

Features of tDBOutput:

- Supports various database operations such as Insert, Update, Insert or Update, and Delete.

- Processes data row-by-row moderately sized datasets.

- Offers batch size and commit frequency settings for performance optimization.

- Easy to configure and integrates with Talend’s row-based workflows.

Common Use Cases

Suitable for small to medium data loads requiring individual row processing or verification.

tDBOutputBulk

tDBOutputBulk is designed for bulk loading scenarios, where the goal is to move large volumes of data into a database quickly and efficiently. How tDBOutputBulk Works: Instead of writing rows directly, it writes the data as a bulk file (e.g., a CSV file) formatted specifically for the target database’s bulk loading utilities. This intermediary file is then loaded into the database using a separate component like tDBBulkExec or an external bulk load utility. The bulk load process reduces overhead by minimizing direct database interactions during every row insert. Key Advantages: Significantly faster than row-by-row inserts for very large datasets (millions of rows). Reduces load on the database and network by using bulk loading mechanisms.

tDBBulkExec:

tDBBulkExec is a Talend component that loads data into a database using the database’s native bulk load utility.

It is usually paired with tDBBulkOutput:

- tDBBulkOutput → Creates a bulk (CSV/delimited) file.

- tDBBulkExec → Executes the DB-specific bulk load command to insert that file into the target table.

Examples:

- MySQL → uses LOAD DATA INFILE

- Oracle → uses SQL*Loader

- SQL Server → uses BULK INSERT

Now, execute the job in Talend Studio using these components.

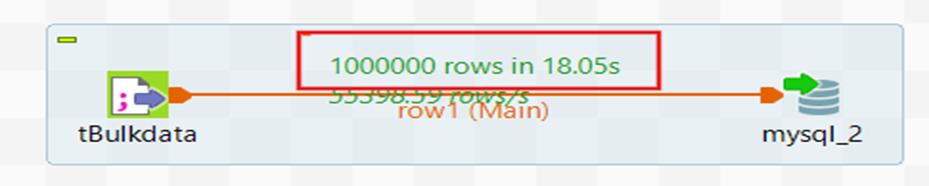

With tDBoutput:

Let us take an input file with one million records and load it using tDBOutput.

Here it takes 18 seconds to load the data using tDBOutput.

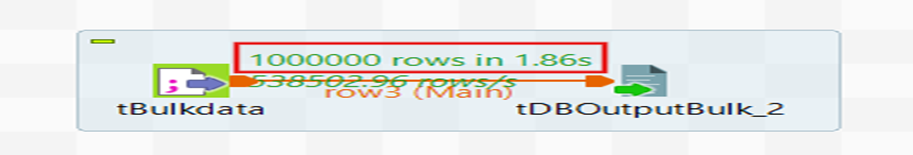

With tDBOutputBulk:

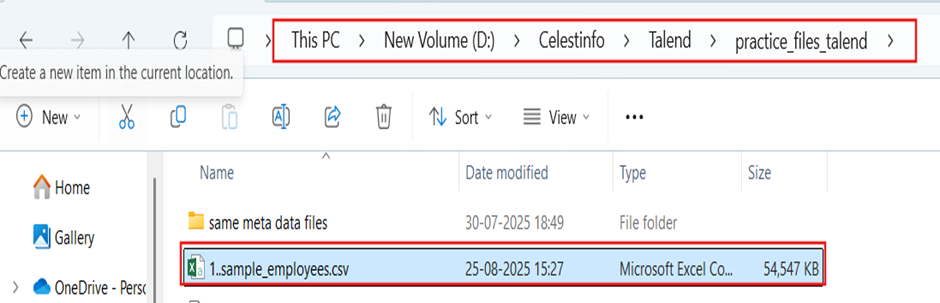

Use the same input file and load it using tDBOutputBulk.

Using tDBOutputBulk, loading the data takes 1.86 seconds.

Here the tDBOutputBulk component writes incoming data to a file.

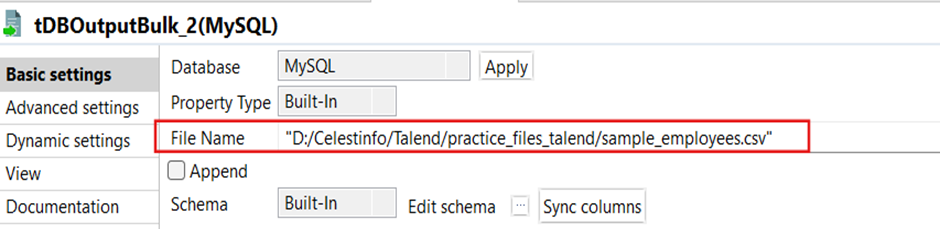

Here we give the file path to store that bulk-file.

Now the bulk-file is stored in the desired location.

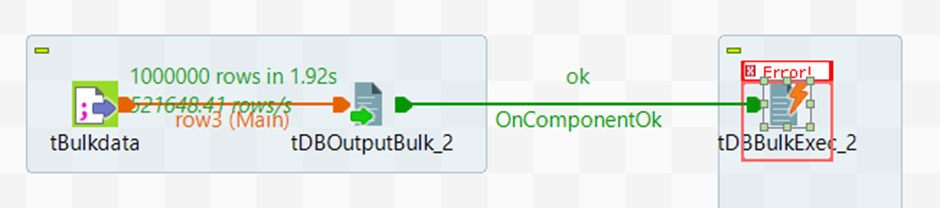

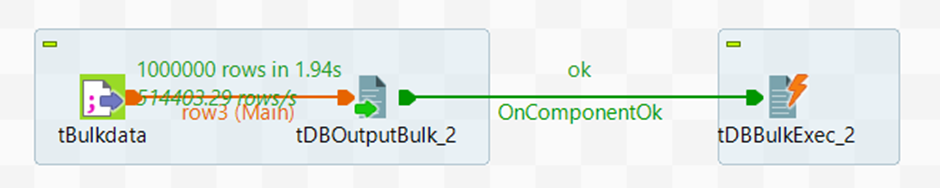

Now run the job with tDBBulkExec.

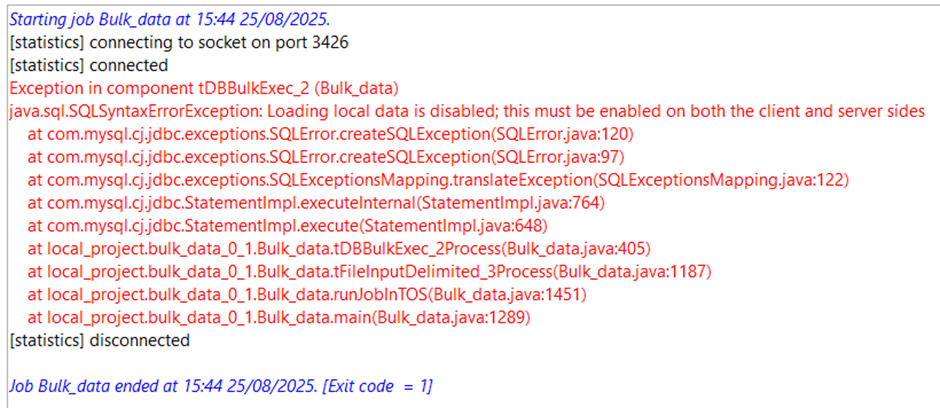

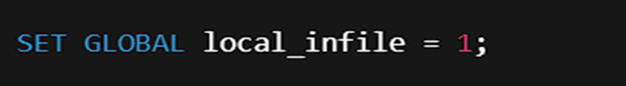

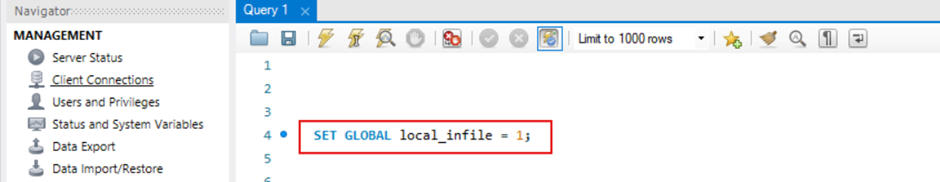

This error occurs on the MySQL side because MySQL is not configured to load data from local files. To fix this, you need to do:

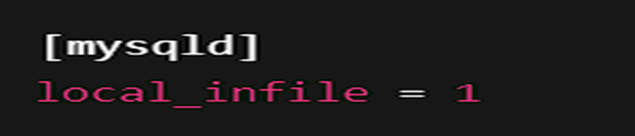

The SET GLOBAL local_infile = 1; setting is temporary and will reset after MySQL restarts. This mode must be enabled on both server and client sides to allow file-based data loading via SQL statements like LOAD DATA LOCAL INFILE. To make it permanent, add this line under the [mysqld] section of your MySQL configuration file (my.cnf on Linux or my.ini on Windows).

Then restart MySQL service. This way, tDBBulkExec (or any LOAD DATA LOCAL INFILE command) will always work without needing to re-run the SQL command.

Now run the job again.

The job executes successfully and loads all the data into the database.

| Feature | tDBOutput | tDBBulkOutput |

|---|---|---|

| Purpose | Writes data directly into the database table row by row | Writes data into a flat file (CSV/Delimited) for bulk load |

| Insert Method | Executes SQL statements (INSERT, UPDATE, DELETE) for each row | Dumps rows into a file, no direct DB insert |

| Performance | Slower for large volumes (millions of rows) because of row-by-row DB calls | Much faster as it just writes to a file |

Conclusion

Choosing the right component for writing data to a database in Talend depends on your data volume and performance requirements. For small to medium datasets that require row-level operations like inserts, updates, or deletes, tDBOutput is the straightforward choice. For large-scale data loads involving millions of rows, the combination of tDBOutputBulk and tDBBulkExec offers significantly better performance by leveraging the database's native bulk loading utilities. Be mindful of heap memory constraints when processing large volumes. For a complete dynamic ETL approach using tJava and JDBC, see our dynamic MySQL ETL guide.

Frequently Asked Questions

Q: What is the difference between tDBOutput and tDBOutputBulk in Talend?

tDBOutput writes data directly into a database table row by row using SQL statements (INSERT, UPDATE, DELETE), making it suitable for small to medium datasets. tDBOutputBulk writes data to a flat file (CSV or delimited format) designed for the database's native bulk loading utility, making it significantly faster for large datasets with millions of rows.

Q: When should I use tDBBulkExec in Talend?

tDBBulkExec is used after tDBOutputBulk to load the generated bulk file into the database using the database's native bulk load utility (for example, LOAD DATA INFILE for MySQL, SQL*Loader for Oracle, or BULK INSERT for SQL Server). Use this combination when you need to load large volumes of data quickly.

Q: Why does tDBBulkExec fail with a local_infile error in MySQL?

This error occurs because MySQL is not configured to load data from local files by default. To fix it, run SET GLOBAL local_infile = 1 in MySQL. To make it permanent, add local_infile=1 under the [mysqld] section of your MySQL configuration file (my.cnf on Linux or my.ini on Windows) and restart the MySQL service.

Related Articles

Still have questions?

Get AssistanceReady? Let's Talk!

Get expert insights and answers tailored to your business requirements and transformation.

Get Assistance