How to Create a Data Pipeline in Azure Data Factory (ADF): A Complete Guide

Last updated: December 2024

Quick answer: To create a data pipeline in Azure Data Factory (ADF), set up a Storage Account and upload your source data, create Linked Services for both your source (Blob Storage) and sink (Azure SQL Database), define Datasets for input and output, then build a pipeline with a Copy Activity that maps source columns to destination columns. Trigger the pipeline and monitor execution in ADF’s Monitor tab.

Azure Data Factory (ADF) is Microsoft’s cloud-based data integration service for building automated data pipelines at scale. Whether you need to copy files from Blob Storage to Azure SQL Database or orchestrate complex ETL processes, ADF provides a visual, code-free interface to create, schedule, and monitor data pipelines. For more advanced ADF patterns, see our guide on building a metadata-driven ADF pipeline from REST API to Snowflake.

In this step-by-step guide, you will learn how to build a data pipeline in ADF from scratch, covering storage account setup, linked services, datasets, pipeline creation, and monitoring.

Pre-requisites

Before starting, make sure you have:

- An Azure account

- A Data Factory created

- A CSV file stored in Azure Blob Storage

- An Azure SQL Database with a table ready to receive data

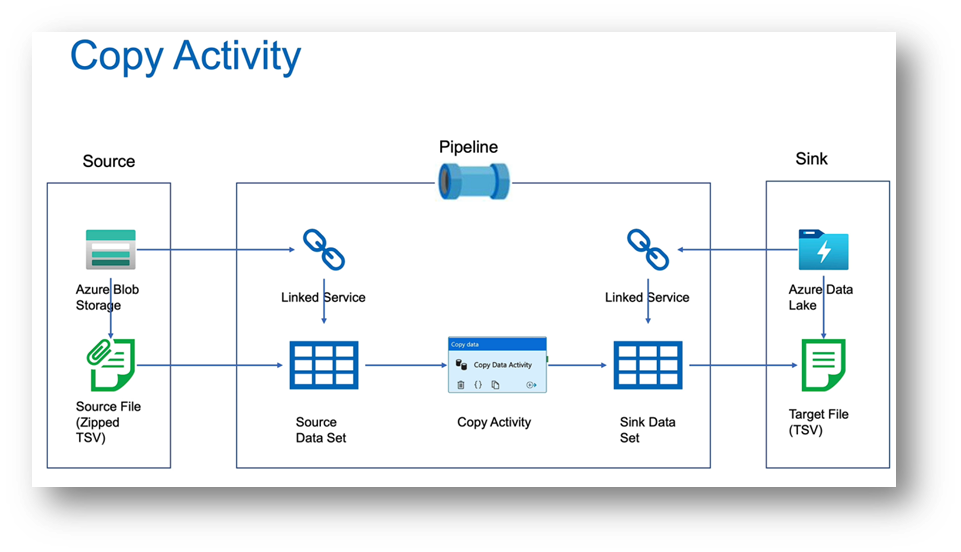

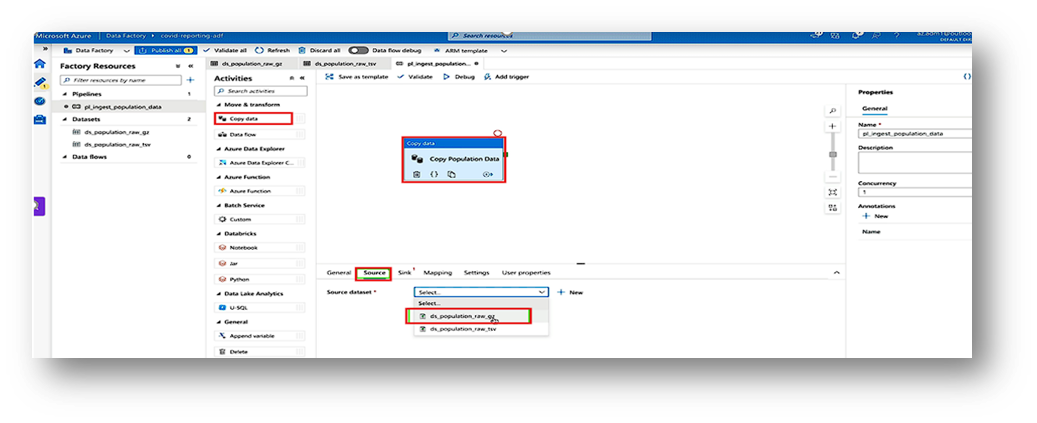

The images above illustrate the Copy activity in the pipeline.

This is the basic structure for creating any pipeline.

The steps below walk through each part in detail, hands-on.

Steps to Create the Pipeline

Step 1: Create a Storage Account

You need a storage account to hold your data files.

- Log in to the Azure Portal.

- Click Create a resource → search for Storage account → click Create.

- Fill in the following details:

  - Resource Group: Choose an existing one or create a new one.

  - Storage account name: For example, mystorage123 (must be globally unique).

  - Region: Choose your region.

  - Performance: Standard is fine.

  - Redundancy: Locally-redundant storage (LRS) is enough for learning.

- Click Review + Create → Create.

- Once deployed, open your storage account.

- Go to Containers → + Container → give it a name (like inputdata) → set public access to Private → Create.

- Open the new container and click Upload to add your source CSV file.
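The portal rejects names that break Azure's naming rules, so it can help to check candidates before filling in the form. The sketch below is a minimal local check, assuming the documented rules: storage account names are 3 to 24 lowercase letters and digits, and container names are 3 to 63 lowercase letters, digits, and single hyphens. The function names are illustrative, and the check cannot tell you whether an account name is already taken globally.

```python
import re

# Storage account names: 3-24 characters, lowercase letters and digits only.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")

# Container names: lowercase letters, digits, and hyphens; must start and end
# with a letter or digit, and hyphens may not be consecutive.
_CONTAINER_NAME = re.compile(r"[a-z0-9](?:-?[a-z0-9])*")


def is_valid_account_name(name: str) -> bool:
    """Return True if the name satisfies the storage account naming rules."""
    return _ACCOUNT_NAME.fullmatch(name) is not None


def is_valid_container_name(name: str) -> bool:
    """Return True if the name satisfies the blob container naming rules."""
    return 3 <= len(name) <= 63 and _CONTAINER_NAME.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ("mystorage123", "MyStorage", "my-storage"):
        print(candidate, is_valid_account_name(candidate))
    print("inputdata", is_valid_container_name("inputdata"))
```

Uppercase letters and hyphens are the most common reasons an account name is rejected, which is why the example name mystorage123 uses neither.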

Step 2: Create a Data Factory

- Log in to the Azure Portal.

- Click Create a resource → search for Data Factory.

- Fill in the details (Name, Region, Resource Group, etc.).

- Click Review + Create, then click Create.

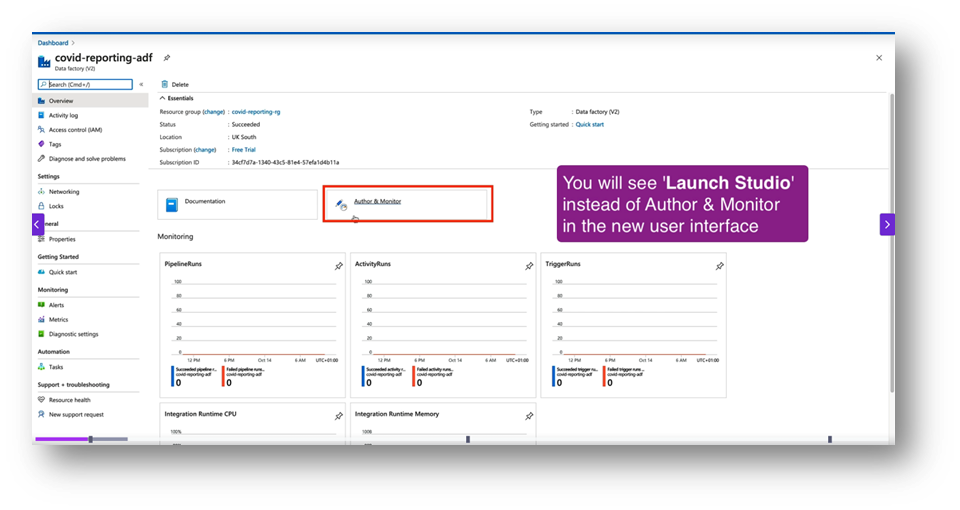

Step 3: Open ADF Studio

- Once the Data Factory is deployed, go to the resource → click Launch studio (labeled Author & Monitor in older versions of the portal). This opens ADF Studio.

Step 4: Create Linked Services

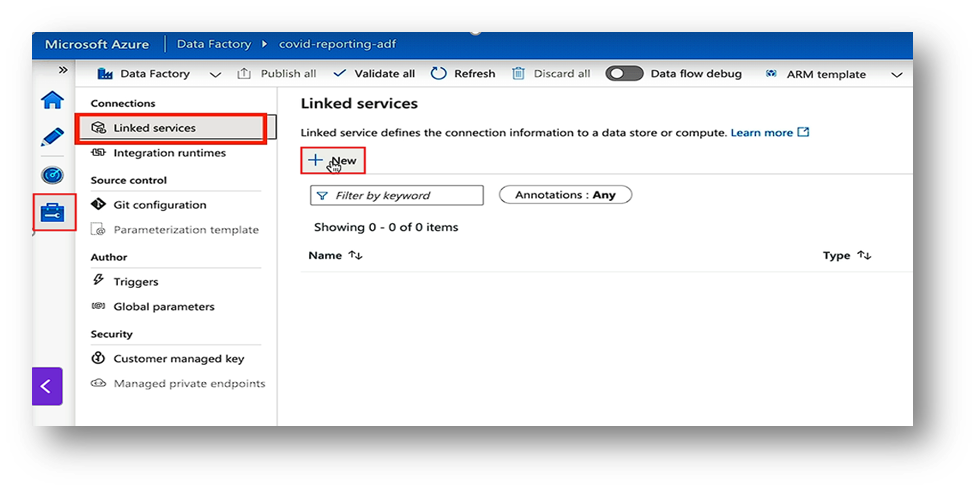

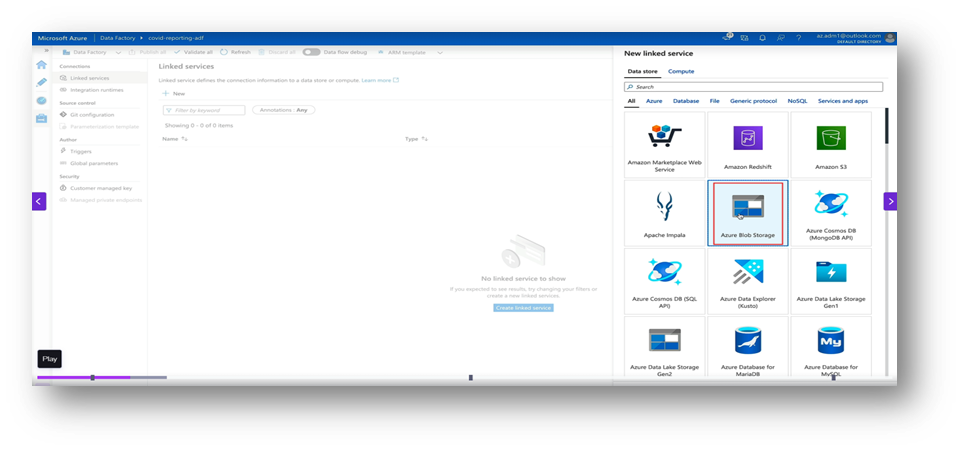

Linked Services are your connections to data stores. You need one for the source and one for the sink.

- Source (Blob Storage): Go to Manage → Linked Services → New → Azure Blob Storage. Add the connection details and test the connection.

- Sink: Create a second linked service the same way. This guide uses Azure Blob Storage for the sink as well, but you could use another store, such as a SQL database.

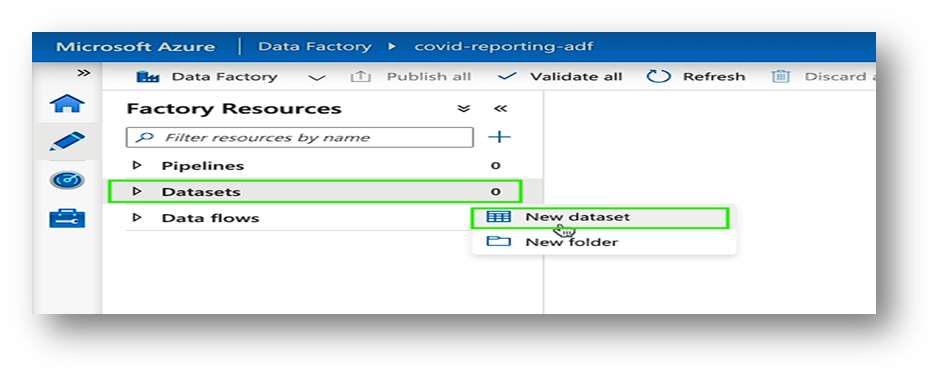
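Behind the form, ADF stores each linked service as a JSON definition, which you can inspect in ADF Studio's code view. The sketch below builds that JSON shape in Python for an Azure Blob Storage linked service using connection-string authentication. The linked service names and the placeholder connection string are assumptions for illustration; in practice the Studio form fills these in for you, and a real account key should not be hard-coded.

```python
import json


def blob_linked_service(name: str, connection_string: str) -> dict:
    """Build the JSON definition of an Azure Blob Storage linked service."""
    return {
        "name": name,
        "properties": {
            "type": "AzureBlobStorage",
            "typeProperties": {"connectionString": connection_string},
        },
    }


# Placeholder values only: substitute your own account name and key.
PLACEHOLDER_CONNECTION = (
    "DefaultEndpointsProtocol=https;"
    "AccountName=<accountName>;"
    "AccountKey=<accountKey>;"
    "EndpointSuffix=core.windows.net"
)

# Hypothetical names for the two connections used in this guide.
source_ls = blob_linked_service("SourceBlobStorage", PLACEHOLDER_CONNECTION)
sink_ls = blob_linked_service("SinkBlobStorage", PLACEHOLDER_CONNECTION)

print(json.dumps(source_ls, indent=2))
```

If the source and sink live in the same storage account, a single linked service can serve both; two are shown here to mirror the steps above.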

Step 5: Create Datasets

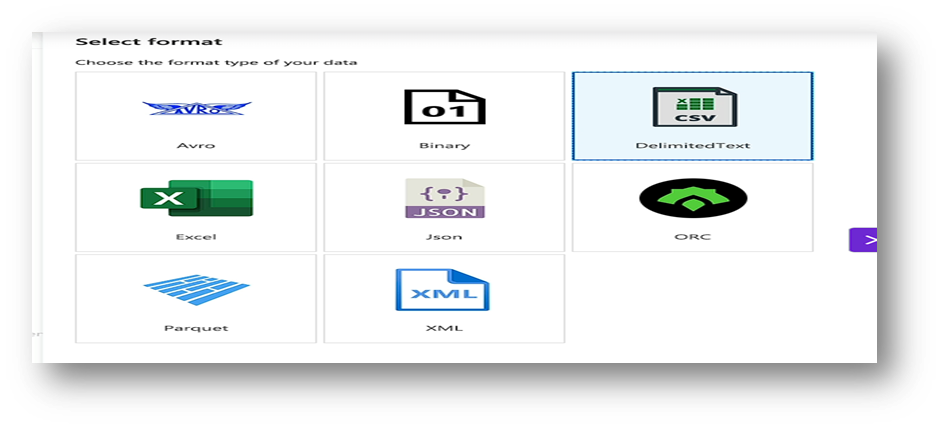

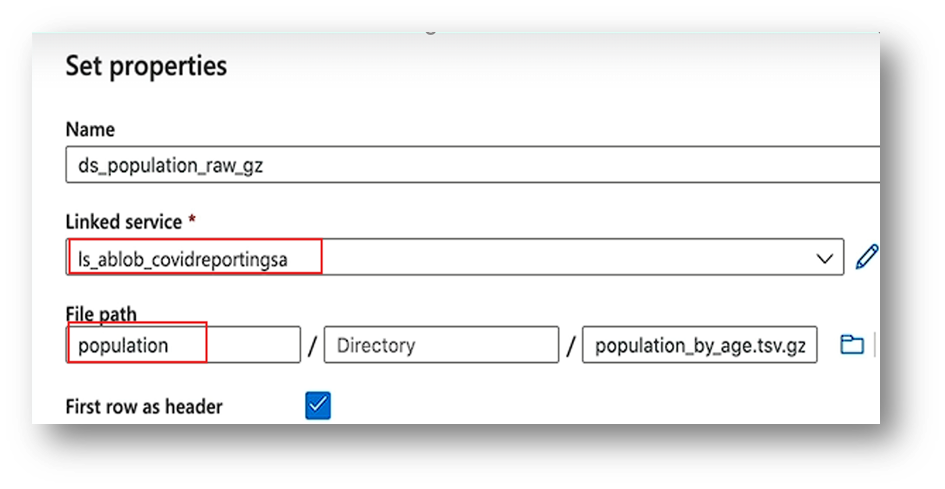

Datasets describe your data.

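- Source Dataset (Blob): Choose DelimitedText → link to your Blob container → select your CSV file.

- Sink Dataset (Blob): Create it the same way as the source dataset: choose DelimitedText → link to your Blob container → set the file path. Point it at a different folder or file name than the source so the copy does not overwrite its own input.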
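A dataset is also stored as JSON: it names a linked service and describes where the file lives and how it is formatted. The following sketch shows roughly what a DelimitedText dataset on Blob Storage looks like. The dataset names, the outputdata container, and the file name sample.csv are hypothetical; only inputdata comes from the steps above. The comma delimiter and header row are assumptions about your CSV.

```python
import json


def delimited_text_dataset(name: str, linked_service: str,
                           container: str, file_name: str) -> dict:
    """Build the JSON definition of a DelimitedText (CSV) dataset in Blob Storage."""
    return {
        "name": name,
        "properties": {
            # Reference to the linked service that holds the connection.
            "linkedServiceName": {
                "referenceName": linked_service,
                "type": "LinkedServiceReference",
            },
            "type": "DelimitedText",
            "typeProperties": {
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                    "fileName": file_name,
                },
                "columnDelimiter": ",",      # assumes a comma-separated file
                "firstRowAsHeader": True,    # assumes the first row holds column names
            },
            "schema": [],                    # left empty; ADF can import it later
        },
    }


source_ds = delimited_text_dataset("SourceCsv", "SourceBlobStorage", "inputdata", "sample.csv")
sink_ds = delimited_text_dataset("SinkCsv", "SinkBlobStorage", "outputdata", "sample.csv")

print(json.dumps(source_ds, indent=2))
```

Writing the sink to a separate container keeps the input file untouched when the pipeline runs.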

Step 6: Build the Pipeline

- Go to Author → Pipelines → New Pipeline.

- Drag a Copy Data activity to the canvas.

- In Source, choose your source Blob dataset.

- In Sink, choose your sink Blob dataset.

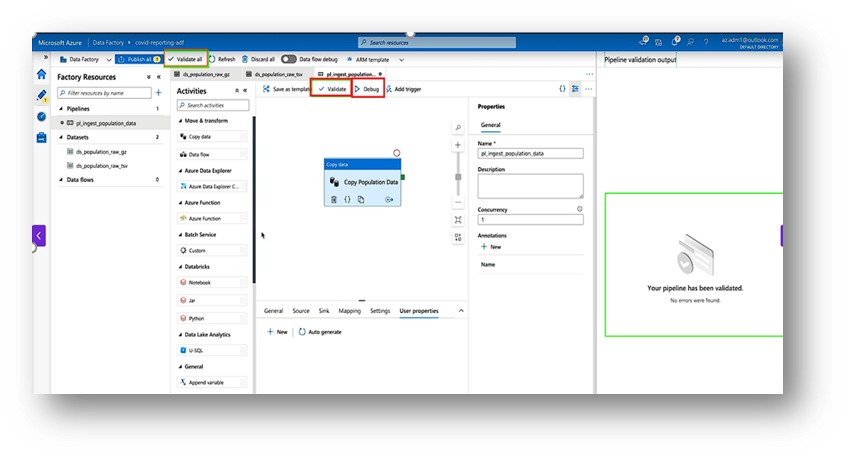
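The canvas you just built corresponds to a pipeline JSON definition with a single Copy activity that reads from one dataset and writes to another. The sketch below assembles a minimal version of that definition. The pipeline, activity, and dataset names are hypothetical, and the definition ADF Studio generates typically contains additional settings (format settings, retry policy, and so on) that are omitted here.

```python
import json


def dataset_ref(name: str) -> dict:
    """Reference a dataset by name, as activities do in pipeline JSON."""
    return {"referenceName": name, "type": "DatasetReference"}


def copy_pipeline(pipeline_name: str, source_dataset: str, sink_dataset: str) -> dict:
    """Build a pipeline with one Copy activity between two DelimitedText datasets."""
    copy_activity = {
        "name": "CopyBlobToBlob",  # hypothetical activity name
        "type": "Copy",
        "inputs": [dataset_ref(source_dataset)],
        "outputs": [dataset_ref(sink_dataset)],
        "typeProperties": {
            "source": {
                "type": "DelimitedTextSource",
                "storeSettings": {"type": "AzureBlobStorageReadSettings"},
            },
            "sink": {
                "type": "DelimitedTextSink",
                "storeSettings": {"type": "AzureBlobStorageWriteSettings"},
            },
        },
    }
    return {"name": pipeline_name, "properties": {"activities": [copy_activity]}}


pipeline = copy_pipeline("CopyPipeline", "SourceCsv", "SinkCsv")
print(json.dumps(pipeline, indent=2))
```

Seeing the JSON makes the relationship explicit: the activity refers to datasets, and each dataset refers to a linked service.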

Step 7: Test and Run

- Click Validate to check the pipeline for configuration errors, then click Debug to run a test.

- Once it works, click Add Trigger → Trigger Now to run it.
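Trigger Now has a programmatic counterpart: the Azure management REST API exposes a createRun operation for pipelines. The sketch below only builds the request URL, to show which identifiers a run request needs. The subscription ID, resource group, factory, and pipeline names are placeholders, and actually sending the request (an authenticated POST with a bearer token) is outside the scope of this example.

```python
from urllib.parse import quote

API_VERSION = "2018-06-01"  # Data Factory REST API version


def create_run_url(subscription_id: str, resource_group: str,
                   factory_name: str, pipeline_name: str) -> str:
    """Build the URL for the Data Factory 'Pipelines - Create Run' operation."""
    return (
        "https://management.azure.com"
        f"/subscriptions/{quote(subscription_id, safe='')}"
        f"/resourceGroups/{quote(resource_group, safe='')}"
        "/providers/Microsoft.DataFactory"
        f"/factories/{quote(factory_name, safe='')}"
        f"/pipelines/{quote(pipeline_name, safe='')}"
        f"/createRun?api-version={API_VERSION}"
    )


# Placeholder identifiers for illustration only.
url = create_run_url(
    "00000000-0000-0000-0000-000000000000", "my-rg", "my-adf", "CopyPipeline"
)
print(url)
```

A successful call returns a run ID, which is the same identifier you see listed in the Monitor tab.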

Step 8: Monitor the Pipeline

- Go to the Monitor tab.

- You can see each run’s status, duration, and errors (if any).
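If you export run history or fetch it through the API, the same status and duration fields can be summarized in a few lines. The sketch below works on hand-written sample records, not real output: the field names (runId, status, durationInMs, message) follow the shape of ADF pipeline run records, but the values are invented for illustration.

```python
from collections import Counter

# Hypothetical sample records shaped like ADF pipeline runs.
sample_runs = [
    {"runId": "run-1", "pipelineName": "CopyPipeline", "status": "Succeeded",
     "durationInMs": 42000, "message": ""},
    {"runId": "run-2", "pipelineName": "CopyPipeline", "status": "Succeeded",
     "durationInMs": 38000, "message": ""},
    {"runId": "run-3", "pipelineName": "CopyPipeline", "status": "Failed",
     "durationInMs": 10000, "message": "Sample error text"},
]


def summarize_runs(runs: list) -> dict:
    """Count runs per status, list failed run IDs, and average the durations."""
    status_counts = Counter(run["status"] for run in runs)
    failed_ids = [run["runId"] for run in runs if run["status"] == "Failed"]
    durations = [run["durationInMs"] for run in runs
                 if run.get("durationInMs") is not None]
    average_seconds = sum(durations) / len(durations) / 1000 if durations else None
    return {
        "status_counts": dict(status_counts),
        "failed_run_ids": failed_ids,
        "average_duration_seconds": average_seconds,
    }


summary = summarize_runs(sample_runs)
print(summary)
```

For a failed run, the message field is usually the first place to look before drilling into activity-level details in the Monitor tab.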

Conclusion

Azure Data Factory (ADF) is a cloud service for building data pipelines. It lets you connect different data sources, both cloud and on-premises, and move data between them without complex coding. You can design, schedule, and monitor pipelines visually in a single platform, which makes recurring jobs such as a daily data refresh straightforward to automate.

To extend your ADF pipelines with Fabric and Snowflake integration, see our guide on Azure SQL to Snowflake via Fabric and OneLake.

Frequently Asked Questions

What is Azure Data Factory used for?

Azure Data Factory (ADF) is a cloud-based data integration service that allows you to create, schedule, and manage data pipelines for moving and transforming data across various sources and destinations.

Do I need coding skills to create a pipeline in ADF?

No, ADF provides a visual drag-and-drop interface in ADF Studio that lets you build pipelines without writing code. However, you can use expressions and custom scripts for advanced transformations.

What are Linked Services and Datasets in ADF?

Linked Services define the connection information to data stores (like Azure Blob Storage or SQL Database), while Datasets represent the structure and location of data within those stores that your pipeline reads from or writes to.

How can I monitor my ADF pipeline runs?

ADF provides a built-in Monitor tab where you can view pipeline run history, check status and duration, inspect activity-level details, and troubleshoot any errors that occurred during execution.
